A Perl utility to improve Exadata CellCLI statistics

Sunday, July 31, 2011 at 9:10PM

I’ve only recently had the opportunity to start experimenting with our relatively new Exadata 1/4 rack. Like most Exadata newbs, I found the most immediately useful new tool to be the cellcli utility, which lets us get at storage cell metrics. Kerry Osborne’s blog post on top 10 commands is required reading when getting started.

Among CellCLI’s annoyances are:

- You can’t join between METRICDEFINITION and METRICCURRENT or other metric tables, so you can’t easily see the descriptions of the metric values you are viewing.

- CellCLI only reports the values for the current storage cell, so you can’t get a global view across the cells.

I decided a good first project would be to write a utility that could display the metric definitions and the values together, and which would merge values from all of the storage cells. Since my old friend Perl is present on the storage cells, it wasn’t too hard to put together a tool to do this. The script is here.
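To make the idea concrete, here is a minimal shell sketch of the merge step. It is not the Perl script itself: it assumes the metric definitions and the per-cell values have already been saved as tab-separated text, and the file layout, the function name merge_metrics, the descriptions and the numbers are all invented for illustration.

```shell
#!/bin/bash
# Sketch only: hand-made sample data standing in for collected CellCLI output.
workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT

# name<TAB>description (hypothetical stand-in for METRICDEFINITION)
printf '%s\t%s\n' \
  FC_IO_RQ_R      'placeholder description: flash cache reads' \
  FC_IO_RQ_R_MISS 'placeholder description: flash cache read misses' \
  > "$workdir/defs.tsv"

# cell<TAB>name<TAB>value (hypothetical stand-in for METRICCURRENT from each cell)
printf '%s\t%s\t%s\n' \
  exa1cel01 FC_IO_RQ_R 100  exa1cel01 FC_IO_RQ_R_MISS 10 \
  exa1cel02 FC_IO_RQ_R 250  exa1cel02 FC_IO_RQ_R_MISS 20 \
  exa1cel03 FC_IO_RQ_R 50   exa1cel03 FC_IO_RQ_R_MISS 30 \
  > "$workdir/values.tsv"

# $1 = definitions file, $2 = values file
merge_metrics() {
  awk -F'\t' '
    NR == FNR { desc[$1] = $2; next }   # first file: remember each description
    { sum[$2] += $3 }                   # second file: add up values per metric
    END {
      for (name in sum)
        printf "%s\t%s\t%s\n", name, sum[name], desc[name]
    }
  ' "$1" "$2" | LC_ALL=C sort
}

# One line per metric: name, sum across the three cells (400 and 60 here), description
merge_metrics "$workdir/defs.tsv" "$workdir/values.tsv"
```

The join is done in memory by metric name, which is all that's needed here because the definitions table is small.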

Setup

You’ll want to have password-less SSH connectivity between cells for this to work. Many of us will be familiar with doing this for RAC, but if you aren’t, a quick primer is available at http://blogs.translucentcode.org/mick/archives/000230.html. I wrote this script to partially automate the procedure:

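    # Helper script to set up passwordless SSH to each named host
    if [ $# -eq 0 ]
    then
        echo "Usage: $0 remoteHost [remoteHost ...]"
        exit 1
    fi

    # Create a key pair only if one does not already exist.
    # (Newer OpenSSH releases disable DSA keys by default; use -t rsa and id_rsa.pub there.)
    [ -f ~/.ssh/id_dsa ] || ssh-keygen -t dsa
    while [ $# -gt 0 ]; do
        remoteHost=$1
        # Copy the public key over, then append it to the remote authorized_keys
        scp ~/.ssh/id_dsa.pub "${remoteHost}:"
        ssh "$remoteHost" 'mkdir -p ~/.ssh; chmod 700 ~/.ssh; cat ~/id_dsa.pub >>~/.ssh/authorized_keys; chmod 644 ~/.ssh/authorized_keys; rm ~/id_dsa.pub'
        shift
    done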

From a database node, run the script with the names of all the storage cells on the command line. I have three cells so I issued the command like this:

    [melbourne@exa1db02 ~]$ bash setup_ssh.sh exa1cel01 exa1cel02 exa1cel03

Then you will be prompted with various SSH messages about passphrases, and so on. Just hit return or “y” if prompted, except when asked for passwords. You’ll have to enter the password for each storage cell twice to get everything set up. After that you should be able to ssh to the storage cells without being prompted for a password.
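If you want to confirm the setup before running the utility, something like the following should do. It's a small sketch rather than part of the original scripts: check_cells is a made-up function name, and it relies on ssh's BatchMode option, which makes ssh fail instead of prompting for a password.

```shell
#!/bin/bash
# Report whether each named host accepts key-based SSH logins.
check_cells() {
  local host failed=0
  for host in "$@"; do
    # -n keeps ssh away from stdin; BatchMode=yes disables password prompts
    if ssh -n -o BatchMode=yes -o ConnectTimeout=5 "$host" true 2>/dev/null; then
      echo "$host: ok"
    else
      echo "$host: key-based login failed"
      failed=1
    fi
  done
  return "$failed"
}

# Example, using the cell names from this post:
#   check_cells exa1cel01 exa1cel02 exa1cel03
```

The function returns non-zero if any host refuses a key-based login, so it can also be used as a guard in a wrapper script.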

Usage

Running the command with no arguments will generate the following usage message:

    [melbourne@exa1db02 ~]$ perl cellcli.pl
    Usage perl cellcli.pl [options]
    Options:
        --hosts -H comma seperated lists of cell nodes
        --mask  -m print metrics starting with this string
        --desc  -d print descriptions of metrics
        --cells -c print individual cell metrics
        --sci   -s Use scientific notation
        --stats -a Print min/max/avgs
        --help  -h print this
    Example:
        perl cellcli -m FC_IO_RQ_R -h exa1cel01,exa1cel02,exa1cel03
    NB: utility assumes passwordless SSH from this host to the cell nodes
    see http://guyharrison.squarespace.com/blog/2011/7/31/aggregating-exadata-cellcli-statistics.html

The only compulsory argument is “--hosts”, which requires a comma-separated list of the cell nodes. Most of the time you will want to use the “--mask” argument as well, which applies a filter to the metric names. With no other arguments you’ll then get a list of each matching statistic and the sum of values across all the cells:

    [melbourne@exa1db02 ~]$ perl cellcli.pl --hosts exa1cel01,exa1cel02,exa1cel03 --mask FC_IO_RQ_R.*
    Name                    Sum
    ----------------------- ------------
    FC_IO_RQ_R              8.55164e+06
    FC_IO_RQ_R_MISS         675223
    FC_IO_RQ_R_MISS_SEC     0
    FC_IO_RQ_R_SEC          0.1
    FC_IO_RQ_R_SKIP         1.742e+08
    FC_IO_RQ_R_SKIP_SEC     33.6

Note that the mask is a Unix-style regular expression, not an Oracle-style “%” wildcard: '.*' matches any sequence of characters.
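Here's a quick way to see the difference, using grep as a stand-in for the script's own matching. This is a sketch under assumptions: filter_by_mask is a made-up helper, and anchoring the pattern at the start of the name is my reading of the "starting with this string" wording in the usage message.

```shell
#!/bin/bash
# Keep only the metric names (one per line on stdin) that start with a match for the regex in $1.
filter_by_mask() {
  grep -E "^($1)"
}

names='FC_IO_RQ_R
FC_IO_RQ_R_MISS
FC_IO_RQ_W
GD_IO_RQ_W_SM'

# Regex mask: prints FC_IO_RQ_R and FC_IO_RQ_R_MISS
printf '%s\n' "$names" | filter_by_mask 'FC_IO_RQ_R.*'

# Oracle-style wildcard: % is just a literal character here, so nothing matches
printf '%s\n' "$names" | filter_by_mask 'FC_IO_RQ_R%' || echo "(no match)"

# A leading .* matches any prefix: prints FC_IO_RQ_W and GD_IO_RQ_W_SM
printf '%s\n' "$names" | filter_by_mask '.*_IO_RQ_W'
```

It's also worth quoting the mask on the command line so the shell doesn't try to expand the '*' itself.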

The --desc argument adds the description (somewhat truncated) for each metric:

The --cells argument prints the values from each individual cell:

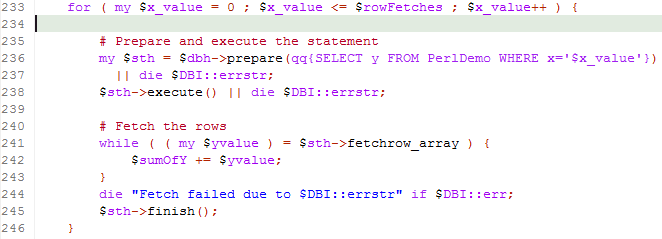

The --stats argument causes min, max and average values to be printed. Some statistics will have more than one value in each cell (GD_IO_RQ_W_SM, for example). Those values are first summed within each cell, and the average is then the average of the per-cell sums, not the average of all the individual values.
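A small worked example may help show why the two averages differ. The awk sketch below uses made-up numbers and grid disk names, and it assumes that min and max are also taken over the per-cell sums, which is my guess rather than something checked against the script.

```shell
#!/bin/bash
# Input on stdin: cell<TAB>object<TAB>value lines for a single metric.
cell_stats() {
  awk -F'\t' '
    { cellsum[$1] += $3; total += $3; n++ }   # sum within each cell first
    END {
      if (n == 0) exit 1                      # no input, nothing to report
      for (c in cellsum) {
        cells++
        if (cells == 1 || cellsum[c] < min) min = cellsum[c]
        if (cells == 1 || cellsum[c] > max) max = cellsum[c]
      }
      printf "sum=%g min=%g max=%g avg=%g avg_of_all_values=%g\n",
             total, min, max, total / cells, total / n
    }'
}

# Hypothetical values: two grid disks on the first two cells, one on the third
printf '%s\t%s\t%s\n' \
  exa1cel01 disk_a 10 \
  exa1cel01 disk_b 20 \
  exa1cel02 disk_a 40 \
  exa1cel02 disk_b 50 \
  exa1cel03 disk_a 60 | cell_stats
# prints: sum=180 min=30 max=90 avg=60 avg_of_all_values=36
```

The per-cell sums are 30, 90 and 60, so their average is 60, while averaging the five individual values would give 36.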

You can use all the arguments together, but your output might be very wide!

Restrictions and caveats

When a metric has multiple object values, they are simply summed. That probably works OK for some statistics but maybe not for others. For instance, the grid disk IO counters are broken down by the name of the grid disk within the cell:

My utility simply sums all of those values:

Hope you find this of use. Please let me know if you’ve got any suggestions.